
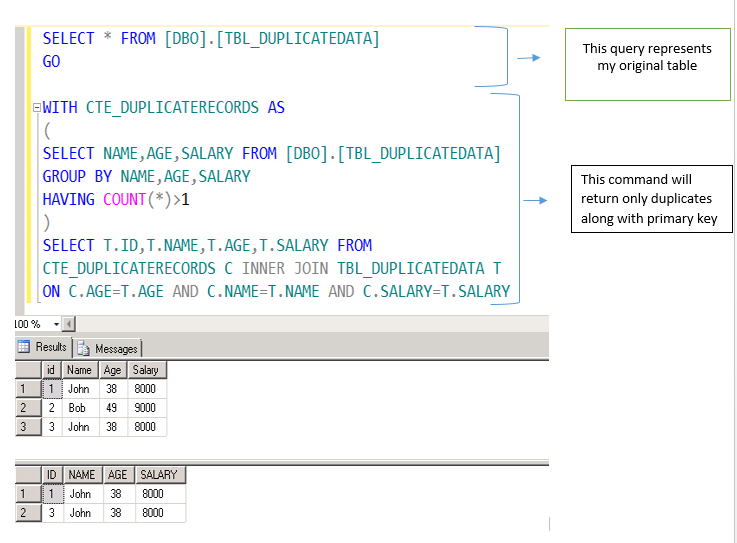

Duplicate records waste time, space, and money. Learn how to find and fix duplicate values using SQL's GROUP BY and HAVING clauses.

The best way to practice GROUP BY and HAVING clauses is the interactive SQL Practice Set course. It contains over 80 hands-on exercises that let you practice different SQL constructions, from simple single-table queries, through aggregation, grouping, and joining data from multiple tables, to complex topics such as subqueries.

Database best practices usually dictate having unique constraints (such as the primary key) on a table to prevent the duplication of rows when data is extracted and consolidated. However, you may find yourself working on a dataset with duplicate rows. This could be because of human error, an application bug, or uncleaned data that's been extracted and merged from external sources, among other things.

Why fix duplicate values? They can mess up calculations. They can even cost a company money; for example, an e-commerce business might process duplicated customer orders multiple times, which can have a direct impact on the business's bottom line.

In this article, we will discuss how you can find those duplicates in SQL by using the GROUP BY and HAVING clauses. First, you will need to define the criteria for detecting duplicate rows.

A few points apply once you move on to deleting duplicates in PostgreSQL. The target of a DELETE statement cannot be a CTE, only the underlying table; deleting through a CTE is a spillover from SQL Server. Related questions: "How to use the physical location of rows (ROWID) in a DELETE statement" and "How do I (or can I) SELECT DISTINCT on multiple columns?" Also note that the values of the system column ctid can change between commands due to background processes or concurrent write operations (but not within the same command).
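
For the detection step, the query below is a minimal sketch of finding duplicates with GROUP BY and HAVING. The table name `table_with_dups` and the columns `name`, `address`, and `zipcode` are assumptions borrowed from the deletion examples in this article; substitute whichever columns define a duplicate in your data.

```sql
-- Sketch: list each combination of values that occurs more than once.
-- Table and column names are illustrative assumptions.
SELECT name, address, zipcode, count(*) AS occurrences
FROM   table_with_dups
GROUP  BY name, address, zipcode   -- the criteria that define a duplicate
HAVING count(*) > 1                -- keep only groups with more than one row
ORDER  BY occurrences DESC;
```

Because GROUP BY treats NULL values as equal, rows that match on every grouped column and share a NULL in one of them are counted in the same group.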

ctid is an implementation detail of Postgres; it's not in the SQL standard and can change between major versions without warning (even if that's very unlikely).

A self-join with the USING clause is typically faster than NOT IN as well. It and the EXISTS variant should result in the same query plan.

Important difference: the EXISTS and self-join queries treat NULL values as not equal, while GROUP BY (or DISTINCT or DISTINCT ON ()) treats NULL values as equal. This does not matter for columns defined NOT NULL. Otherwise, depending on your definition of "duplicate", you'll need one approach or the other. Or use IS NOT DISTINCT FROM to compare values (which may exclude some indexes).
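
The NULL-safe comparison mentioned here can be sketched as follows, assuming PostgreSQL and a hypothetical table `table_with_dups` with columns `name`, `address`, and `zipcode`. IS NOT DISTINCT FROM treats two NULL values as equal, which matches the behavior of GROUP BY.

```sql
-- Sketch (PostgreSQL): self-join delete in which NULL matches NULL.
-- Table and column names are illustrative assumptions.
DELETE FROM table_with_dups t1
USING  table_with_dups t2
WHERE  t1.ctid < t2.ctid                         -- keep the row with the highest ctid
AND    t1.name    IS NOT DISTINCT FROM t2.name
AND    t1.address IS NOT DISTINCT FROM t2.address
AND    t1.zipcode IS NOT DISTINCT FROM t2.zipcode;
```

Since this form may not be able to use some indexes, it is worth checking the plan with EXPLAIN before running it on a large table.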

`NOT IN (...)` with a subquery is a tricky query style when NULL values can be involved, but the system column ctid is never NULL. Using EXISTS instead is typically faster.
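
An EXISTS-based delete might look like the sketch below. It is not necessarily the exact query the text refers to; the table `table_with_dups` and its columns `name`, `address`, and `zipcode` are assumptions, and the statement presumes PostgreSQL.

```sql
-- Sketch (PostgreSQL): delete every row that has a duplicate with a higher ctid.
-- Table and column names are illustrative assumptions.
DELETE FROM table_with_dups t1
WHERE  EXISTS (
   SELECT 1
   FROM   table_with_dups t2
   WHERE  t2.name    = t1.name
   AND    t2.address = t1.address
   AND    t2.zipcode = t1.zipcode
   AND    t2.ctid    > t1.ctid     -- another copy with a higher ctid exists
);
```

Each group of duplicates keeps only its row with the highest ctid. The `=` comparisons never match NULL values, so rows with a NULL in a compared column are left in place.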

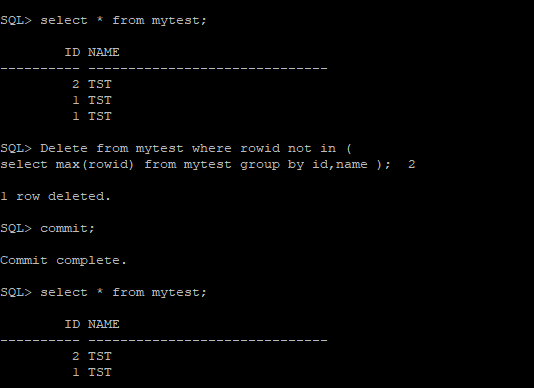

In a perfect world, every table has a unique identifier of some sort. In the absence of any unique column (or combination thereof), use the ctid column: in each group defined by `GROUP BY name, address, zipcode` (list the columns defining duplicates), keep the row returned by `SELECT min(ctid)` (ctid is NOT NULL by definition) and delete the rest. Such a query is short, conveniently listing column names only once.

Related: "How do I decompose ctid into page and row numbers?"
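
Put together, the `min(ctid)` and `GROUP BY` fragments suggest a statement along these lines. This is a reconstruction, not necessarily the original query: the table name `table_with_dups` is an assumption, and the statement presumes a PostgreSQL version that supports `min()` on the type of ctid.

```sql
-- Sketch (PostgreSQL): keep one row per group, delete all others.
DELETE FROM table_with_dups            -- table name is an assumption
WHERE  ctid NOT IN (
   SELECT min(ctid)                    -- ctid is NOT NULL by definition
   FROM   table_with_dups
   GROUP  BY name, address, zipcode    -- list columns defining duplicates
);
```

Because the subquery groups rows, NULL values in the grouped columns are treated as equal here.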

I like the ctid-based solution, but wanted to show a solution with the USING keyword: `DELETE FROM table_with_dups T1`, joined through USING to a second alias `T2` of the same table, with the conditions `WHERE T1.ctid < T2.ctid` (delete the "older" ones) and `AND T1.name = T2.name` (list the columns that define duplicates).

If you want to review the records before deleting them, simply replace DELETE with `SELECT *` and USING with a comma; the condition `WHERE T1.ctid < T2.ctid` then selects the "older" ones.

Update: I tested some of the different solutions here for speed. If you don't expect many duplicates, this solution performs much better than the ones that have a `NOT IN (...)` clause, as those generate a lot of rows in the subquery. If you rewrite the query to use `IN (...)`, it performs similarly to the solution presented here, but the SQL code becomes much less concise.

Update 2: If you have NULL values in one of the key columns (which you really shouldn't, IMO), you can use COALESCE() in the condition for that column, e.g. `AND COALESCE(T1.col_with_nulls, '') = COALESCE(T2.col_with_nulls, '')`.
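
Written out in full, the review-then-delete workflow with USING might look like the sketch below. The `address` and `zipcode` conditions are assumptions added for illustration (the text only shows `name`), and the statements presume PostgreSQL.

```sql
-- 1. Review the rows that would be removed, each paired with a duplicate that stays or goes later.
SELECT *
FROM   table_with_dups T1, table_with_dups T2
WHERE  T1.ctid    < T2.ctid       -- select the "older" ones
AND    T1.name    = T2.name       -- list columns that define duplicates
AND    T1.address = T2.address    -- assumed additional column
AND    T1.zipcode = T2.zipcode;   -- assumed additional column

-- 2. Delete them with the same conditions.
DELETE FROM table_with_dups T1
USING  table_with_dups T2
WHERE  T1.ctid    < T2.ctid       -- delete the "older" ones
AND    T1.name    = T2.name
AND    T1.address = T2.address
AND    T1.zipcode = T2.zipcode;
```

In the preview, a row with several duplicates at higher ctid values appears once per match, so the row count of the SELECT can exceed the number of rows the DELETE removes.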
